My first thoughts were, “Wow, what great coverage! A well-written piece that highlights my content. Other leading media groups are using my material to highlight and summarize my research and offer shortened versions for easier consumption.”

Sure enough, the flow, ideas, focus on AI, main predictions and more were all taken from my blog, even if some items were slightly reworded.

For example, my article begins with:

“Every December, global experts examine trends and international focus areas for the next 12 months and beyond. For 2024, top topics range from upcoming elections to regional wars to space exploration to advances in AI. And with technology playing a more central role in every area of life, annual cybersecurity prediction reports, cyber industry forecasts and advanced research on cyber threat trends and data breaches are more important than ever before.”

The MSN.com article from Cryptopolitan begins with:

“In the ever-evolving landscape of cybersecurity, experts predict a myriad of challenges and advancements for the upcoming year. As 2024 approaches, global attention is drawn to crucial topics such as elections, regional conflicts, space exploration, and the pervasive influence of artificial intelligence (AI). Annual cybersecurity prediction reports have become indispensable tools for navigating the intricate web of cyber threats and data breaches.”

I must admit that this other cybersecurity prediction article is well-written, and in some ways may even be clearer than my security prediction blog for the first 900 words. Of course, this MSN.com version is much shorter, does not include my vendor-specific lists, does not link to the other industry reports that I reference and does not add the other materials (such as videos) contained in my blog.

You can read the two pieces yourself, but you will quickly see how all the main points from “Unveiling the Top 24 Security Predictions for 2024 (Part 1)” are lifted straight from my article, “The Top 24 Security Predictions for 2024 (Part 1).” Even the title is the same, with the addition of the word “Unveiling.”

But there is no attribution, reference, hyperlink or any other pointer to my original article.

I reached out to the author, Derrick Clinton, who is described on his author page as a “freelance writer with an interest in blockchain and cryptocurrency. He works mostly on crypto projects' problems and solutions, offering a market outlook for investments.”

Initially, he did not respond. After a few weeks, we had a conversation on LinkedIn.

I wrote: “You need to add a reference to my original article in all of your syndicated versions of this article. I am not seeking compensation, only proper recognition for writing the material.”

Clinton responded: “I am just a writer from Cryptopolitan given a chance to research and write on trending news and articles. I've temporarily taken down the article for internal discussion with management. Upon resolution, I'll promptly notify you to publish it again with you tagged. I appreciate your patience. In a week, I'll address any resolution and article will go live again.”

WHAT HAPPENED NEXT?

After this conversation, I went to the original link at the Cryptopolitan website and, sure enough, I received the message: “Page not found.” (Thank you, Derrick, for taking down that article for review.)

However, when I visited other versions of the same article at many of the syndicated websites, the article was still live. For example, at the time I am writing this blog, the article can still be found at websites like this: https://coinmarketcap.com/community/articles/657f39743d78101767c63f96/.

The article is also still available at several other syndicated websites.

While I am hopeful that this particular situation will be resolved with Derrick Clinton and that my annual prediction piece will get the credit it deserves, I am writing about this example to illustrate a much wider set of issues to consider as we move forward into 2024 and beyond.

COPYRIGHT LAW AND PLAGIARISM IN THE AGE OF GenAI

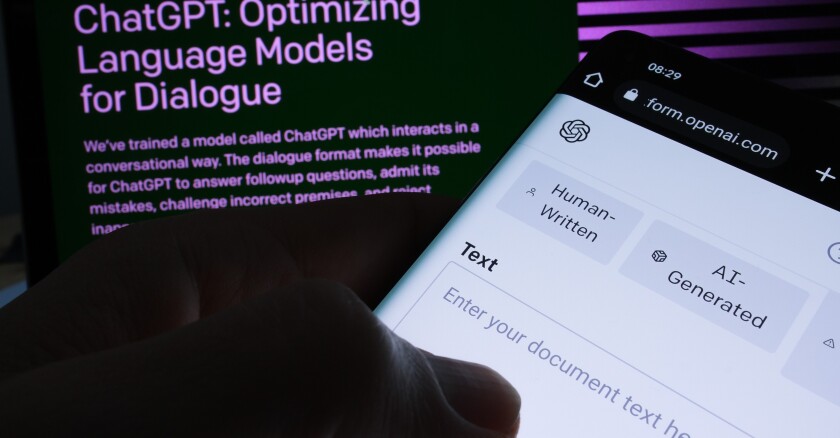

Generative artificial intelligence (GenAI) technology and new tools are bringing many new copyright law and plagiarism questions to the surface. Here are a few:

First, there are major issues making global headlines revolving around copyright, such as this story: “The New York Times sues Microsoft, ChatGPT maker OpenAI over copyright infringement.” The issues include: “The publisher said in a filing in the U.S. District Court for the Southern District of New York that it seeks to hold Microsoft and OpenAI to account for the ‘billions of dollars in statutory and actual damages’ it believes it is owed for the ‘unlawful copying and use of The Times’s uniquely valuable works.’

“The Times said in an emailed statement that it ‘recognizes the power and potential of GenAI for the public and for journalism,’ but added that journalistic material should be used for commercial gain with permission from the original source.”

This topic is further described in this Fast Company article: “The New York Times’s OpenAI lawsuit could put a damper on AI’s 2024 ambitions.” Here's an excerpt:

“The suit marked a sobering coda to 2023, a year in which the AI industry sprinted forward unrestrainedly, and mostly without regulation. Many in the tech industry had hoped that 2024 would bring far wider application of AI systems. But lawsuits over copyrights could slow everything down, as legal exposure concerns become a bigger factor in AI companies’ plans for how and when to release new models. Could training data—not safety concerns or job destruction fears—become the AI industry’s Achilles’ heel?

“The OpenAI lawyers may argue that an AI model isn’t much different from a human who ingests a bunch of information from the web then uses it as a basis for their own thoughts. That whole debate may be moot if the Times can prove that it was financially harmed when OpenAI’s and Microsoft’s AI models spat out line-for-line text lifted from the paper’s coverage. But the main issue is that this is all uncharted legal territory; a high-profile trial may begin to establish how copyright law applies to the training of AI models. Even if OpenAI ends up paying damages, the two parties may still come to an accommodation allowing the AI company to use Times content for training.”

Here are a few questions related to my example presented earlier in this article:

- What (or who) will stop someone from summarizing a long article by just dropping it into ChatGPT or Bard or some other GenAI tool, and publishing the result as their own original article? For example: Drop a 2,000-word article into ChatGPT and ask it to summarize the article in 700 words.

- What responsibilities do GenAI tools have for checking copyright protections and guarding against plagiarism?

- What responsibilities do authors and syndication network editors have for checking copyright protections and guarding against plagiarism? (For example: Should MSN.com have checked that this content was original before publishing it on its platform?)

FINAL THOUGHTS

I found my 2024 cybersecurity prediction content in the MSN article last month largely because the syndicated article did not even change my title. Had this article been called “Cybersecurity Trends for 2024,” I might never have found the plagiarism, due to the minor rewording used in the new piece. No doubt, tools will be developed that allow people to copy the ideas and content of others without being flagged for plagiarism.

There are plenty of other implications to GenAI’s ability to create new media content and offer timely updates to global stories. No doubt, we will all benefit from these new GenAI technologies in 2024 and beyond.

However, I offer a note of caution in the area of copyright law and plagiarism in our new age of GenAI. My view is that intellectual property must be respected in any court decisions regarding media content. Only time will tell what the courts decide.